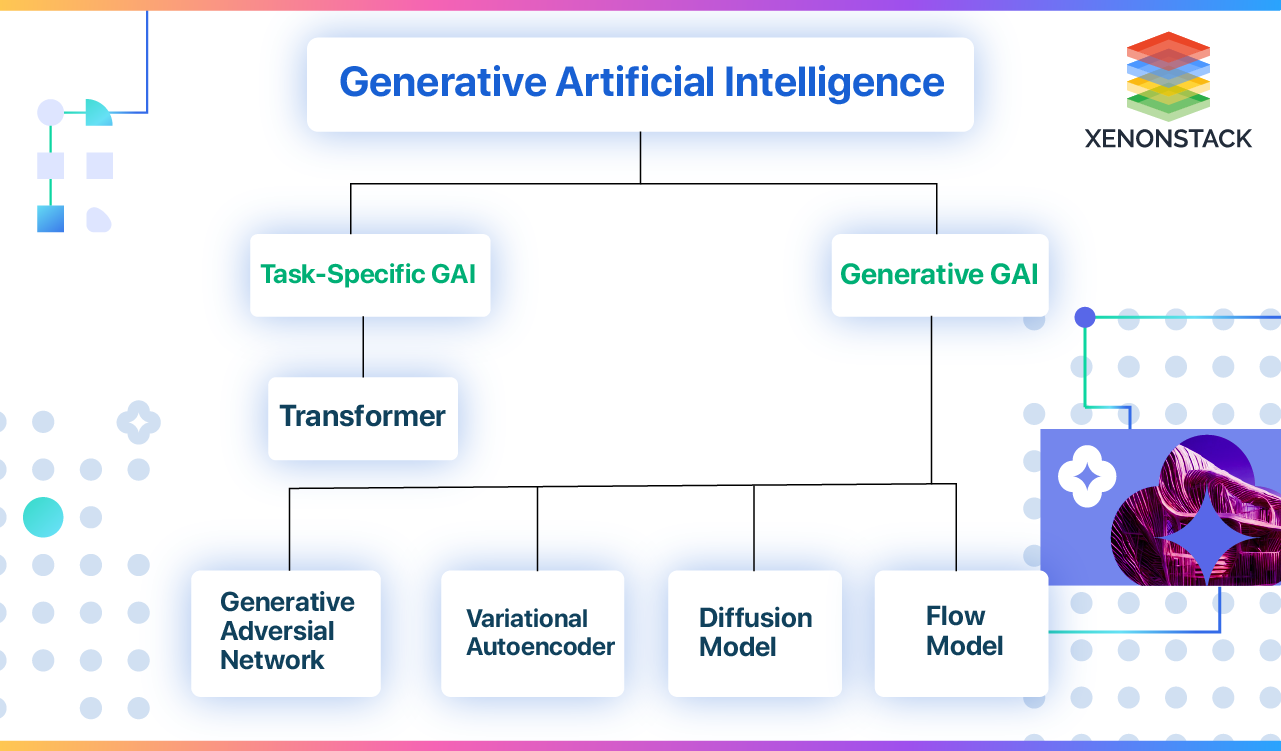

Generative AI has business applications beyond those covered by discriminative models. Various algorithms and related models have been developed and trained to create new, realistic content from existing data.

A generative adversarial network, or GAN, is a machine learning framework that pits two neural networks, a generator and a discriminator, against each other, hence the "adversarial" part. The competition between them is a zero-sum game, where one agent's gain is another agent's loss. GANs were invented by Ian Goodfellow and his colleagues at the University of Montreal in 2014.

The discriminator outputs a number between 0 and 1. The closer the output is to 0, the more likely the sample is fake. Conversely, numbers closer to 1 indicate a higher likelihood that the sample is real. Both the generator and the discriminator are commonly implemented as CNNs (Convolutional Neural Networks), especially when working with images. The adversarial nature of GANs lies in a game-theoretic scenario in which the generator network must compete against its adversary.

Its adversary, the discriminator network, attempts to distinguish between samples drawn from the training data and those drawn from the generator. A GAN is considered successful when the generator creates a fake sample so convincing that it can fool both the discriminator and humans.
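
To make the 0-to-1 scores and the zero-sum game concrete, here is a minimal sketch of the minimax value function from the original GAN paper (Goodfellow et al., 2014), V(D, G) = E[log D(x)] + E[log(1 - D(G(z)))]. The discriminator tries to maximize V and the generator tries to minimize it. The sketch works on plain lists of discriminator outputs instead of real networks, and the helper names are hypothetical; practical implementations often replace the generator's objective with a "non-saturating" variant.

```python
import math

def gan_value(d_real, d_fake):
    """Estimate V(D, G) = E[log D(x)] + E[log(1 - D(G(z)))].

    d_real: discriminator outputs on real training samples, each in (0, 1)
    d_fake: discriminator outputs on generated samples, each in (0, 1)
    """
    real_term = sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def discriminator_loss(d_real, d_fake):
    # The discriminator maximizes V, so its loss is -V
    return -gan_value(d_real, d_fake)

def generator_loss(d_real, d_fake):
    # Zero-sum: the generator minimizes the same V the discriminator maximizes
    # (the real-data term does not depend on the generator at all)
    return gan_value(d_real, d_fake)

# A confident, correct discriminator: real -> near 1, fake -> near 0
sharp = discriminator_loss([0.95, 0.9], [0.05, 0.1])
# A fooled discriminator: it cannot tell real from fake
fooled = discriminator_loss([0.5, 0.5], [0.5, 0.5])
print(sharp < fooled)  # True
```

The fooled discriminator's loss is log 4 (about 1.386), the value at the theoretical equilibrium where the generator's distribution matches the training data.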

This adversarial cycle repeats throughout training.

A transformer, in turn, learns to find patterns in sequential data like written text or spoken language. Based on the context, the model can predict the next element of the series, for example, the next word in a sentence.

A vector represents the semantic characteristics of a word, with similar words having vectors that are close in value. The word crown might be represented by the vector [3, 103, 35], while apple could be [6, 7, 17], and pear might look like [6.5, 6, 18]. Of course, these vectors are just illustrative; real ones have many more dimensions.
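
"Close in value" can be measured directly. This sketch takes the three illustrative vectors from the paragraph and compares them with Euclidean distance and cosine similarity, two common choices; the article does not say which measure any particular model uses.

```python
import math

# Toy 3-dimensional "embeddings" from the example; real models learn
# vectors with hundreds or thousands of dimensions.
embeddings = {
    "crown": [3.0, 103.0, 35.0],
    "apple": [6.0, 7.0, 17.0],
    "pear": [6.5, 6.0, 18.0],
}

def euclidean(u, v):
    """Straight-line distance between two vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))

def cosine_similarity(u, v):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

apple, pear, crown = embeddings["apple"], embeddings["pear"], embeddings["crown"]
print(euclidean(apple, pear))                    # 1.5
print(euclidean(apple, crown))                   # about 97.7
print(round(cosine_similarity(apple, pear), 3))  # about 0.998
print(round(cosine_similarity(apple, crown), 3)) # about 0.634
```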

At this stage, information about the position of each token within a sequence is added in the form of another vector, which is summed with the input embedding. The result is a vector reflecting the word's initial meaning and its position in the sentence. It's then fed to the transformer neural network, which consists of two blocks.
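
The article does not say how the position vector is built. A minimal sketch, assuming the sinusoidal scheme from the original Transformer paper ("Attention Is All You Need"), looks like this; many later models learn their position vectors instead. The 4-dimensional embedding is hypothetical.

```python
import math

def positional_encoding(pos, d_model):
    """Sinusoidal position vector for position `pos` (assumed scheme)."""
    pe = []
    for i in range(d_model):
        # Dimensions 2k and 2k+1 share the frequency 1 / 10000**(2k / d_model)
        angle = pos / (10000 ** ((i - i % 2) / d_model))
        pe.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
    return pe

def add_position(embedding, pos):
    """Sum a word embedding with the position vector for its slot in the sentence."""
    pe = positional_encoding(pos, len(embedding))
    return [e + p for e, p in zip(embedding, pe)]

apple = [6.0, 7.0, 17.0, 0.0]   # hypothetical 4-D embedding
print(add_position(apple, 0))   # [6.0, 8.0, 17.0, 1.0]
print(add_position(apple, 3))   # same word, different position, different vector
```

Because the position vector is added rather than concatenated, the result keeps the embedding's size while still differing for the same word at different positions.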

Central to the transformer is the self-attention mechanism. Mathematically, the relations between words in a phrase look like distances and angles between vectors in a multidimensional vector space. This mechanism can detect subtle ways in which even distant data elements in a series influence and depend on each other. For example, in the sentences "I poured water from the pitcher into the cup until it was full" and "I poured water from the pitcher into the cup until it was empty," a self-attention mechanism can distinguish the meaning of "it": in the former case, the pronoun refers to the cup, in the latter to the pitcher.
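
A minimal sketch of scaled dot-product self-attention follows. To keep it short, it assumes a single attention head and skips the learned query, key, and value projections (it uses Q = K = V = X), so it shows the mechanics rather than a trained model. The token vectors are hypothetical.

```python
import math

def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(X):
    """Single-head scaled dot-product self-attention with Q = K = V = X.

    X is a list of token vectors (one per position). Returns the attention
    weights (row i says how much token i attends to each token) and a new,
    context-mixed vector for each token.
    """
    d = len(X[0])
    weights = []
    for q in X:
        # Dot product = similarity of this token to every token, scaled by sqrt(d)
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in X]
        weights.append(softmax(scores))
    # Each output is a weighted average of all token vectors
    outputs = [
        [sum(w[j] * X[j][c] for j in range(len(X))) for c in range(d)]
        for w in weights
    ]
    return weights, outputs

tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # hypothetical 2-D token vectors
weights, outputs = self_attention(tokens)
for row in weights:
    print([round(w, 2) for w in row])
```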

A softmax function is used at the end to calculate the probability of different outputs and choose the most probable option. The generated output is then appended to the input, and the whole process repeats.

The diffusion model is a generative model that creates new data, such as images or sounds, by mimicking the data on which it was trained.
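
The transformer's output loop (softmax over candidate outputs, pick the most probable one, append it to the input, repeat) can be sketched as a small greedy decoding loop. `toy_logits` is a hypothetical stand-in for a trained transformer, and the greedy choice mirrors "choose the most probable option"; real systems often sample from the probabilities instead.

```python
import math

VOCAB = ["I", "poured", "water", "<eos>"]

def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def toy_logits(tokens):
    # Hypothetical stand-in for a trained transformer: it simply scores the
    # vocabulary entry after the last token highest.
    last = VOCAB.index(tokens[-1])
    return [3.0 if i == last + 1 else 0.0 for i in range(len(VOCAB))]

def generate(prompt, max_steps=10):
    tokens = list(prompt)
    for _ in range(max_steps):
        probs = softmax(toy_logits(tokens))                       # probability of each next token
        next_id = max(range(len(VOCAB)), key=lambda i: probs[i])  # most probable option
        tokens.append(VOCAB[next_id])                             # append the output to the input
        if VOCAB[next_id] == "<eos>":
            break                                                 # stop at end-of-sequence
    return tokens

print(generate(["I"]))  # ['I', 'poured', 'water', '<eos>']
```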

Think of the diffusion model as an artist-restorer who studied paintings by old masters and can now paint canvases in the same style. The diffusion model does roughly the same thing in three main stages. Forward diffusion gradually introduces noise into the original image until the result is simply a chaotic set of pixels.
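
The noising stage can be sketched in a few lines. This assumes the linear noise schedule from the DDPM paper (Ho et al., 2020), with 1,000 steps and noise levels rising from 1e-4 to 0.02, and uses its closed-form shortcut for jumping straight to any step. The three-pixel "image" is hypothetical.

```python
import math
import random

def alpha_bars(num_steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative signal-retention factors for an assumed linear noise schedule."""
    bars, prod = [], 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        prod *= 1.0 - beta  # each step keeps a fraction (1 - beta) of the signal variance
        bars.append(prod)
    return bars

def noisy_sample(x0, t, bars, rng):
    """Jump straight to step t: x_t = sqrt(a_t) * x0 + sqrt(1 - a_t) * noise."""
    a = bars[t]
    return [math.sqrt(a) * x + math.sqrt(1.0 - a) * rng.gauss(0.0, 1.0) for x in x0]

rng = random.Random(0)
bars = alpha_bars(1000)
pixels = [0.8, -0.2, 0.5]                    # a tiny hypothetical "image"
print(noisy_sample(pixels, 10, bars, rng))   # still close to the original
print(noisy_sample(pixels, 999, bars, rng))  # essentially pure noise
```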

If we go back to our analogy of the artist-restorer, forward diffusion is handled by time, covering the painting with a network of cracks, dust, and grease; sometimes, the painting is reworked, with certain details added and others removed. The learning stage is like studying a painting to grasp the old master's original intent: the model carefully analyzes how the added noise alters the data.
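
The article does not name a training objective for the stage in which the model studies how the added noise alters the data. A common choice, assumed here from DDPM, is to train the model to predict the noise that was added. The sketch shrinks this to a toy: every training "image" is the single value 2.0, there is one fixed noise level, and a hypothetical linear predictor stands in for a neural network.

```python
import math
import random

def train_noise_predictor(alpha_bar=0.5, target=2.0, n=1000, steps=500, lr=0.1, seed=0):
    """Fit eps_hat = w * x_t + b by gradient descent on mean squared error."""
    rng = random.Random(seed)
    # Forward diffusion at one noise level: x_t = sqrt(a) * x0 + sqrt(1 - a) * eps
    eps = [rng.gauss(0.0, 1.0) for _ in range(n)]
    x_t = [math.sqrt(alpha_bar) * target + math.sqrt(1.0 - alpha_bar) * e for e in eps]
    w, b = 0.0, 0.0
    for _ in range(steps):
        # Gradients of mean((w * x + b - eps)^2) with respect to w and b
        grad_w = grad_b = 0.0
        for x, e in zip(x_t, eps):
            err = w * x + b - e
            grad_w += 2.0 * err * x / n
            grad_b += 2.0 * err / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

w, b = train_noise_predictor()
print(round(w, 3), round(b, 3))
```

In this degenerate setting the noise is exactly recoverable from the noisy value, so training should drive `w` toward 1/sqrt(0.5) (about 1.414) and `b` toward -2.0.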

This understanding allows the model to effectively reverse the process later. After learning, the model can reconstruct the distorted data through a process called reverse diffusion. It starts with a noise sample and removes the blur step by step, the same way our artist removes contaminants and later layers of paint.
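
Reverse diffusion can be sketched as a loop that starts from pure noise and, at every step, subtracts the predicted noise. The update rule and linear schedule are assumptions taken from DDPM. Because no trained network is available here, `oracle_noise` is a hypothetical stand-in that is exact only when every training "image" equals the single value `target`.

```python
import math
import random

def linear_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Assumed linear beta schedule and its cumulative products alpha_bar."""
    betas = [beta_start + (beta_end - beta_start) * t / (num_steps - 1)
             for t in range(num_steps)]
    alpha_bars, prod = [], 1.0
    for beta in betas:
        prod *= 1.0 - beta
        alpha_bars.append(prod)
    return betas, alpha_bars

def oracle_noise(x_t, alpha_bar, target):
    # Hypothetical stand-in for a trained network: if every training "image"
    # equals `target`, the added noise can be recovered exactly from x_t.
    return (x_t - math.sqrt(alpha_bar) * target) / math.sqrt(1.0 - alpha_bar)

def reverse_diffusion(target, num_steps=1000, seed=0):
    rng = random.Random(seed)
    betas, alpha_bars = linear_schedule(num_steps)
    x = rng.gauss(0.0, 1.0)  # start from a pure-noise sample
    for t in reversed(range(num_steps)):
        beta, ab = betas[t], alpha_bars[t]
        ab_prev = alpha_bars[t - 1] if t > 0 else 1.0
        eps_hat = oracle_noise(x, ab, target)
        # Remove the predicted noise for this step (DDPM mean update)
        mean = (x - beta / math.sqrt(1.0 - ab) * eps_hat) / math.sqrt(1.0 - beta)
        # Posterior variance; it is 0 at the final step, so no noise is re-added there
        var = beta * (1.0 - ab_prev) / (1.0 - ab)
        x = mean + math.sqrt(var) * rng.gauss(0.0, 1.0)
    return x

print(reverse_diffusion(target=2.0))  # very close to 2.0
```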

Latent representations contain the fundamental elements of data, allowing the model to regenerate the original data from this encoded essence. A latent representation works somewhat like DNA: if you change the DNA molecule just a little bit, you get a completely different organism.
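
The idea of regenerating data from an encoded essence can be illustrated with a deliberately tiny, hypothetical example: 2-D points that all lie along one direction need only one number, the latent code, to describe them. Real models such as autoencoders learn nonlinear encoders and decoders; this linear projection only shows the encode-then-decode round trip.

```python
# Hypothetical dataset whose points all lie along the unit direction U
# (0.6^2 + 0.8^2 = 1), so one number per point is enough to describe it.
U = (0.6, 0.8)

def encode(point):
    """Compress a 2-D point into a 1-D latent code (projection onto U)."""
    return point[0] * U[0] + point[1] * U[1]

def decode(z):
    """Regenerate a 2-D point from its latent code."""
    return (z * U[0], z * U[1])

z = encode((3.0, 4.0))  # approximately 5.0
print(decode(z))        # approximately (3.0, 4.0)
print(decode(z + 1.0))  # changing the code yields a different point
```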

Say, the woman in the second top-right photo looks a bit like Beyoncé but, at the same time, we can see that it's not the pop singer.

As the name suggests, image-to-image translation transforms one type of image into another. There is a range of image-to-image translation variants. One of them, style transfer, involves extracting the style from a famous painting and applying it to another image.
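
The article does not say how style is extracted. One well-known approach, neural style transfer (Gatys et al.), compares Gram matrices of CNN feature maps: they record which features occur together but discard where they occur. In the sketch below, the feature maps are plain lists; a real system would take them from a pretrained CNN.

```python
def gram_matrix(features):
    """Channel-by-channel correlations of a feature map.

    `features` holds one list of activations per channel (flattened over
    spatial positions). Entry [i][j] sums channel i times channel j over all
    positions, so it records which features co-occur but not where.
    """
    return [[sum(a * b for a, b in zip(fi, fj)) for fj in features] for fi in features]

def style_loss(generated, style):
    """Mean squared difference between the two Gram matrices."""
    g, s = gram_matrix(generated), gram_matrix(style)
    n = len(g)
    return sum((g[i][j] - s[i][j]) ** 2 for i in range(n) for j in range(n)) / (n * n)

painting = [[1.0, 2.0], [3.0, 4.0]]    # hypothetical 2-channel, 2-position features
shuffled = [[2.0, 1.0], [4.0, 3.0]]    # same activations, positions swapped
print(gram_matrix(painting))           # [[5.0, 11.0], [11.0, 25.0]]
print(style_loss(shuffled, painting))  # 0.0: same "style", different layout
```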

(Image: output of Stable Diffusion.)

The results of all these programs are quite similar. However, some users note that, on average, Midjourney draws a little more expressively, while Stable Diffusion follows the prompt more closely at default settings.

Researchers have also used GANs to generate synthesized speech from text input.

The main task is to perform audio analysis and create "dynamic" soundtracks that can change depending on how users interact with them. For example, the music might change according to the atmosphere of a game scene or the intensity of the user's workout at the gym.

Logically, videos can also be generated and transformed in much the same way as images. While 2023 was marked by breakthroughs in LLMs and a boom in image generation technologies, 2024 has seen significant advances in video generation. At the beginning of 2024, OpenAI introduced a truly impressive text-to-video model called Sora. Sora is a diffusion-based model that generates video from static noise.

(Image: NVIDIA's Interactive AI Rendered Virtual World.)

Such synthetically generated data can help develop self-driving cars: developers can use generated virtual-world datasets to train pedestrian detection.

Every technology has its limitations, and, of course, generative AI is no exception.

Because generative AI can self-learn, its behavior is difficult to control. The outputs it produces can often be far from what you expect.

Many companies are implementing dynamic, intelligent conversational AI models that customers can interact with through text or speech. In addition to customer service, AI chatbots can supplement marketing efforts and support internal communications.
